Escaping Plato’s Cave: Telecom Metadata to Save LLMs

Towards Experiential AI with CPNI

Author: Sunil Daluvoy (AutoAmerican-Research)

Date: August 2025

Abstract

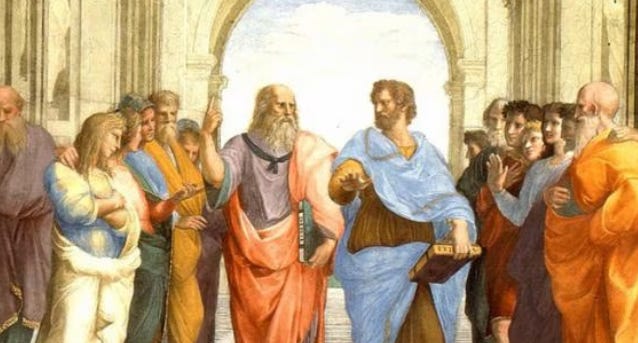

Large Language Models (LLMs) trained on web-scale text are like prisoners in Plato’s Cave: they see only the shadows of human experience expressed through language. These shadows allow mimicry but not direct engagement with outcomes. To achieve true grounding, AI must combine inherited knowledge, the compressed result of human cultural and evolutionary history, with experiential signals from real-world data. Customer Proprietary Network Information (CPNI), such as call records, tower handoffs, and data usage, provides this experiential foundation: passively gathered, precisely timed, and linked to outcomes at a massive scale. At the same time, much of humanity’s knowledge remains locked in proprietary datasets, such as medical records, enterprise logs, and institutional research, which could expand LLMs’ listening capabilities.

This paper presents a framework for training with CPNI natively, situating it within the duality of inheritance and experience. We argue that combining privacy-protected CPNI with governance-enabled access to institutional knowledge fulfills the insight of the Bitter Lesson, which holds that scalable intelligence favors general methods and computation (Sutton, 2019). More recently, Trask (2025) has extended this argument, showing that inheritance of accumulated human knowledge provides extraordinary computational efficiency and should be fused with experiential learning. In this way, CPNI helps AI move beyond shadow imitation toward experiential understanding, answering Sutton’s call for agents that adapt based on real-world feedback. Together, inheritance and experience can help AI leave the cave and advance toward robust, agentic, and ambient intelligence.

1. Introduction

Plato’s allegory of the cave depicts humans chained in darkness, able to see only shadows on a wall and confusing those shadows with reality. LLMs, as they are currently trained, operate in a similar way: they are confined to the shadows of web text. These models excel at predicting which words should follow in a sequence but lack direct real-world experience. They can tell us what someone might say about a commute, but not what actually happens when millions of people move through cities each morning. They can generate fluent conversations, yet they remain blind to actual outcomes, unable to be “surprised” or to adapt when the world doesn’t match their predictions.

LLMs have advanced quickly, guided by scaling laws such as Chinchilla (Hoffmann et al., 2022) and Farseer (Li et al., 2025) and fed by increasingly large web corpora. However, as foundation models converge on the same standardized internet text, the marginal value of additional crawling has diminished. This motivates the search for new, high-value data sources.

Richard Sutton argues that true intelligence requires experiential learning: acting in the world, observing outcomes, and adjusting accordingly. Yet, as Andrew Trask observes, discarding inherited human knowledge entirely would require re-running the 10^50 scale computations of biological evolution. Every sentence humans write encodes the compressed output of millions of years of evolutionary optimization. The lesson is not to choose between inheritance and experience, but to combine them.

CPNI provides the experiential half of this equation: outcome-linked behavioral data that grounds models in lived reality. Proprietary institutional datasets provide the inheritance half: validated human knowledge far beyond what the public internet provides in text. Together, they offer a path out of the cave, linking what people say with what they do, while standing on the shoulders of accumulated civilization.

CPNI for AI?

This paper presents a framework for CPNI-native LLM training that integrates technical, business, and legal considerations. Unlike platform-specific app telemetry or retrospective web text, CPNI captures ongoing behavior. Models can be updated daily, forming feedback loops in which AI interventions generate new behavioral data for ongoing training and refinement. We tackle practical challenges, such as fragmented telecom data, low-latency requirements for ambient AI, and the balance between privacy and utility. We also consider business value, such as a reported 20–40% boost in support containment from AI-powered automation [5]. Incorporating compliance as a system feature helps reduce risks, such as FCC enforcement with severe penalties [6], and aligns with upcoming AI regulations.

This work also addresses fundamental critiques of LLMs. As Richard Sutton noted recently, current models imitate human language without building world models or learning from outcomes. They predict “what a person would say,” not “what will actually happen” [7]. Trained on static corpora, they lack goals linked to real-world reward signals and cannot adapt when predictions are wrong [8][9]. Sutton stresses that intelligence requires experience: acting, observing results, and adjusting accordingly [10]. AI experts, such as @teortaxesTex, argue on X that current LLMs develop fragile “language-world models” that are likely to break when faced with novelty.

CPNI directly tackles these gaps by shifting training from words to actions. Instead of just reading that “many people commute at 8 AM,” a model trained on CPNI observes billions of real commutes through tower handoffs and data spikes, allowing it to predict and respond to these patterns. CPNI incorporates outcome signals, such as call drop rates and throughput, that serve as rewards for ongoing fine-tuning. By including this experiential ground truth, LLMs can develop more robust models that predict not only the next word but the next event in a person’s day. As Sutton suggests, CPNI can help usher in an era of experience for AI [12].

Our contributions are threefold: we establish CPNI as the behavioral equivalent of web text for training LLMs; outline legal and technical safeguards that integrate privacy and compliance by design; and demonstrate through synthetic benchmarks how models can effectively predict behavior while safeguarding privacy. We demonstrate that telecom carriers, when they responsibly utilize CPNI, can emerge as leaders in AI by offering personalized, context-aware services, thereby sidestepping the pitfalls that previously hindered efforts to monetize CPNI, such as location-based advertising that led to FCC fines [13].

2. Background and Related Work

Transformer Architecture and Scaling Laws

Modern LLMs are based on the Transformer architecture (Vaswani et al., 2017), which introduced self-attention mechanisms that allow for efficient modeling of long-range dependencies in sequences. Transformer-based models have demonstrated that increasing scale (parameters and data size) leads to improved performance across various tasks. Scaling laws (Kaplan et al., 2020; Hoffmann et al., 2022) formalize this by showing that test loss improves predictably as a power law of model size and data for a given compute budget. The Chinchilla scaling analysis (Hoffmann et al., 2022) specifically suggested that many early LLMs were under-trained on data relative to their size, and it recommended an optimal ratio of data to parameters for a given compute budget [14]. Recent advances, such as Farseer (Li et al., 2025), extend these laws to include post-training scaling through model distillation and iterative retraining.
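For reference, the parametric loss model fitted in the Chinchilla analysis (Hoffmann et al., 2022) can be written as below. Here N is the parameter count, D is the number of training tokens, C is training compute in FLOPs, and E, A, B, α, β are fitted constants whose values are omitted here. The relation C ≈ 6ND is the common approximation used in that line of work, and the exponents in the second line follow from minimizing the loss under that constraint.

```latex
L(N, D) = E + \frac{A}{N^{\alpha}} + \frac{B}{D^{\beta}},
\qquad C \approx 6ND

N_{\mathrm{opt}}(C) \propto C^{\beta/(\alpha+\beta)},
\qquad
D_{\mathrm{opt}}(C) \propto C^{\alpha/(\alpha+\beta)}
```

When α and β are of similar size, both exponents are close to 0.5, which is the sense in which parameters and data should be scaled roughly in proportion.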

However, these scaling laws often assume the availability of abundant, high-quality data. In practice, LLM developers have nearly exhausted accessible web text sources, such as Common Crawl, Wikipedia, and news archives. As noted in data management surveys, simply adding more of the same web data results in diminishing returns once the model has learned the dominant patterns [1]. Additionally, web data contains biases, such as over-representing certain demographics and topics, and becomes stale, with models trained on 2022 data struggling with events in 2023–2025. These issues limit personalization and temporal relevance. Research into LLM data management emphasizes the importance of data freshness, quality, and diversity as critical next steps [15][16]. This context motivates a shift toward fresh, dynamic data sources, such as CPNI, to continue scaling effective training. CPNI offers an entirely different data modality: one based on behavior rather than text, with its own statistical patterns that can complement text-based knowledge.

Inherited vs. Experiential Learning

The debate between Sutton and Trask highlights two paradigms of intelligence: experiential learning, which involves direct trial-and-error in the world, and inherited learning, which involves absorbing accumulated knowledge. Sutton emphasizes the former, noting that agents must learn from outcomes. Trask counters that inheritance is computationally indispensable: by training on text, models inherit compressed evolutionary and cultural optimization rather than re-deriving it from scratch. AI’s future requires both. Inherited knowledge offers efficiency and breadth; experiential signals provide grounding and adaptability. Current LLMs have excelled at broad listening to inherited text, but their lack of outcome-linked feedback leaves them bound to shadows. Conversely, CPNI delivers rich experiential grounding but without the full semantic inheritance of civilization. This paper situates CPNI within this duality: not as a replacement for inheritance, but as its necessary complement.

Ambient and Agentic AI

Ambient AI refers to intelligence that is pervasively present in the environment, operating “always-on” in the background to assist users in a context-aware manner. It involves the continuous perception of context through embedded sensors and data streams, and often involves anticipatory actions. For example, an ambient AI system in a smart home might automatically adjust heating and lighting by learning the occupant’s daily routine. Agentic AI, on the other hand, refers to AI systems that possess agency, enabling them to set goals, formulate plans, and take autonomous actions in the world (beyond merely responding to queries). This includes proactive decision-making agents, personal assistants that not only answer questions but initiate helpful tasks, or network management AIs that dynamically reconfigure systems to optimize outcomes.

Traditional LLMs, when used in tools like chatbots, have been largely reactive (responding to user prompts) rather than agentic. Techniques like Retrieval-Augmented Generation (RAG) or tool use via prompts can create an illusion of agency but are ultimately driven by user input. Truly agentic AI would require ongoing sensorimotor interaction with the world or streams of events to monitor and respond to. This is where CPNI can play a pivotal role. Telecom data provides a continuous timeline of user behavior (calls made, locations visited, data consumed) that an AI agent can leverage to anticipate needs or detect anomalies. For ambient AI, CPNI is a rich context source: for example, the network can infer that a user is commuting (via cell tower handoffs) and trigger location-based reminders or adjust smart home settings accordingly, since it knows the user is away. For agentic AI, CPNI provides real outcome signals: did the user answer a call, did they move to a new location, was a network intervention successful? Such signals are far more reliable than sporadic ones, such as smartphone app events or coarse GPS pings.
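The commute inference mentioned above can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration: the `Handoff` schema, the idea that "home" and "work" towers are already known for a subscriber, and the 120-minute window. A production system would work on pseudonymized data and would learn these anchors rather than take them as inputs.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Handoff:
    """One tower attachment event (hypothetical schema, not a carrier format)."""
    timestamp: datetime
    tower_id: str


def looks_like_commute(events, home_tower, work_tower, max_minutes=120):
    """Return True if the device leaves home_tower, passes through at least
    one other tower, and reaches work_tower within max_minutes."""
    last_home = None      # most recent time the device was seen at home
    seen_other = False    # has it touched an intermediate tower since then?
    for e in sorted(events, key=lambda ev: ev.timestamp):
        if e.tower_id == home_tower:
            last_home = e.timestamp
            seen_other = False
        elif e.tower_id == work_tower:
            if last_home is not None and seen_other:
                elapsed_min = (e.timestamp - last_home).total_seconds() / 60
                if elapsed_min <= max_minutes:
                    return True
        elif last_home is not None:
            seen_other = True
    return False
```

A rule like this is deliberately crude. It is meant only to show that the raw signal is a timestamped sequence of tower identifiers, from which higher-level context has to be derived.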

Notably, initial research is emerging on combining mobility data with LLMs. For instance, Mobility-LLM (Gong et al., 2024) introduced a framework to analyze human check-in sequences (i.e., location “check-ins” at venues) using LLMs by encoding them as prompts [17]. This approach improved tasks such as next location prediction by incorporating semantic understanding of human mobility patterns. Another study by Liu et al. (2024) used an LLM to generate daily activity chains for individuals with minimal demographic data, thereby simulating plausible mobility patterns without requiring large, proprietary datasets [18][19]. These works underscore that LLMs augmented with behavioral data can bridge the gap between language and action, aligning with Sutton’s call for experience-based learning. CPNI provides a real-world, real-time feed of such behavioral data at a massive scale, making it a prime candidate to drive ambient and agentic capabilities in AI systems.

Regulatory Landscape for CPNI

Using CPNI for anything beyond core telecom services immediately raises legal and ethical considerations. In the United States, 47 U.S.C. §222 (part of the Communications Act) and the FCC’s implementing regulations establish a strict privacy regime for CPNI. Essentially, carriers are prohibited from using or disclosing individually identifiable CPNI for non-core purposes without the customer’s explicit consent [2]. Acceptable uses without additional consent are limited to provisioning the telecom service itself, billing and collection, fraud prevention, and emergency responses, such as 911 calls [20][21]. Any other use, such as marketing or building AI models, generally requires opt-in consent or must involve de-identified (aggregated) data only [22]. The FCC has actively enforced these rules. A notable example occurred in 2024, when the FCC imposed nearly $200 million in fines on major U.S. wireless carriers for sharing customer location data with third-party aggregators without obtaining proper consent [6]. The carriers enabled real-time location lookups, which ultimately fell into the hands of bounty hunters and other unauthorized parties, thereby violating customer privacy. FCC Chair Jessica Rosenworcel emphasized the sensitivity of such data: “In the wrong hands, it can provide those who wish to do us harm the ability to locate us with pinpoint accuracy” [13]. This case demonstrates that sharing CPNI with “trusted” partners can lead to liability if those partners misuse it: carriers remain responsible for downstream misuse, and contractual clauses will not shield them from regulatory action [23].

Beyond FCC rules, overlapping data privacy frameworks from state jurisdictions come into play. The California Consumer Privacy Act (CCPA), as amended by the California Privacy Rights Act (CPRA), imposes transparency and purpose-limitation requirements on the use of consumer data. Its upcoming regulations on Automated Decision-Making Technology will likely require algorithmic impact assessments and bias audits for high-risk AI systems [24]. In the telecom AI context, this means that if CPNI is used to drive automated decisions (e.g., determining a customer’s eligibility for an offer or flagging potential fraud), those decisions must be explainable and regularly audited for fairness and potential disparate impact. GDPR in Europe, while not directly regulating U.S. telcos’ domestic data, influences best practices. It requires a lawful basis for processing personal data and has strict rules for using sensitive data and profiling. Therefore, any global carrier would need to consider the GDPR if CPNI data of EU residents is involved (e.g., roaming data).

Furthermore, at least two U.S. states have enacted laws specifically addressing AI accountability: Colorado’s AI Act (SB 24-205, 2024) and a similar law in California in 2023. The Colorado law requires developers and deployers of high-risk AI systems to implement transparency and oversight measures to ensure accountability. For example, if a telecom uses an AI model (trained on CPNI) to make significant decisions about a customer (like throttling their data or flagging an account for review), the law mandates that the customer be informed that an AI was involved and given the chance to opt out or appeal to a human [25][26]. It also requires detailed documentation of the AI system’s purpose, training data, and the steps taken to reduce algorithmic bias [27][28]. Failure to comply could lead to enforcement by the state Attorney General. Section 5 of the FTC Act (unfair or deceptive acts or practices) is another broad source of authority: if a carrier claims “Your data is safe” but then uses CPNI in an unclear manner, the FTC may consider it a deceptive practice. Overall, the regulatory landscape requires purpose-specific use, user consent or opt-out options, strong security, and oversight for any CPNI-powered AI project. Any framework for CPNI-native LLMs must therefore integrate compliance from the beginning, not as an afterthought.
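One way to read "compliance from the beginning" is as a purpose check that sits in front of every data access. The sketch below encodes only the simplified rule summarized in this section (core purposes pass, everything else needs opt-in consent or aggregated data). The purpose labels, the `AccessRequest` type, and the function name are hypothetical, and the rule is a simplification of the summary above rather than a statement of what the statute or FCC rules require.

```python
from dataclasses import dataclass

# Purposes this section lists as permitted without additional consent.
# The string labels are illustrative, not regulatory terms of art.
CORE_PURPOSES = frozenset(
    {"provisioning", "billing", "fraud_prevention", "emergency"}
)


@dataclass(frozen=True)
class AccessRequest:
    purpose: str               # why the data is being requested
    customer_opted_in: bool    # has the customer given opt-in consent?
    data_is_aggregated: bool   # is the data de-identified and aggregated?


def is_use_permitted(req: AccessRequest) -> bool:
    """Apply the simplified gate: core purposes pass; any other purpose
    needs either opt-in consent or de-identified (aggregated) data."""
    if req.purpose in CORE_PURPOSES:
        return True
    return req.customer_opted_in or req.data_is_aggregated
```

Under this sketch, a request with purpose "model_training" on identifiable data from a customer who has not opted in is rejected, while the same request on aggregated data is allowed. Each decision could also be logged to support the documentation and audit obligations described above.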

Critiques of Text-Only LLM Training

Text-only training of LLMs has achieved remarkable feats, yet it faces criticism for fundamental limitations. We discussed Richard Sutton’s perspective in the Introduction: he argues that LLMs trained solely on internet text lack a true understanding of the world. They don’t interact with the environment and thus cannot ground their knowledge in outcomes or causal relationships; they only learn correlations in language. [8] Sutton emphasizes that an ideal intelligent agent requires continual learning with feedback (the agent takes an action, the world responds, and the agent updates), but LLMs have no way to receive feedback on their outputs once training ends [29][30]. Essentially, current LLMs are stuck in a passive mode: they predict but do not experiment. This makes them prone to confidently asserting falsehoods (hallucinations), since they’ve never empirically tested their “beliefs” in a real environment. Moreover, they lack intrinsic goals or rewards. An LLM doesn’t want anything or try to achieve objectives, unless those are artificially introduced through prompting or fine-tuning.

The X post by the analyst @teortaxesTex (2025) expands on this, noting that LLMs operate on “language-world models.” They have representations of the world, but those representations are formed from textual descriptions by humans, not from the AI’s own sensorimotor experience. It’s similar to Plato’s allegory of the cave: the LLM sees shadows (text descriptions) of the real objects, but not the objects themselves[31]. As a result, when the real world doesn’t match the text it was trained on, an LLM could be caught off-guard – an ontological crisis for the model’s understanding. For example, an LLM might know from text that “glass is fragile,” but without ever dropping a glass, it has no direct experience of the shattering process or the associated sound, limiting its understanding to the words “glass breaks.” This purely language-based model also inherits all the biases and blind spots of its training data. If certain behaviors or populations are underrepresented in the text (or only depicted in biased ways), the LLM’s world model will reflect that. For instance, an LLM might lack accurate data on rural phone usage patterns if most of its training data comes from urban-centric tech articles, which could result in poor performance on applications relevant to underrepresented contexts.

Researchers have been investigating grounded and multimodal training methods to fill these gaps. Some efforts involve training language models with images or videos, enabling the model to associate words with pixels and gain grounding. Others include studies on mobility data and robotics-focused LLMs that connect to sensor data. These efforts show that providing models with real-world data, whether visual, auditory, or CPNI, can significantly enhance their ability to make accurate predictions. Sutton advocates returning to reinforcement learning (RL), a training approach that involves trial-and-error with a reward signal, as a promising direction for AI [32]. Traditional RL relies on simulated environments or games; our proposal with CPNI suggests that the real-world telecom network and user base can serve as both the environment and the reward source for learning (e.g., a model earns rewards for reducing dropped calls or extending users’ battery life). This combination of LLM and RL on real data could lead to the development of systems that not only mimic human language but also develop an understanding of cause and effect. Overall, critiques of text-only LLMs emphasize the importance of grounding, interactivity, goals, and continuous learning, features that the CPNI-native approach aims to incorporate by shifting training data from static texts to live behavioral streams.
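To make the idea of the network as reward source concrete, the following is a minimal sketch of an epsilon-greedy bandit whose reward is derived from whether a call was dropped. It is a textbook bandit, not the method proposed in this paper. The action names, the binary reward, and the toy environment with fixed drop probabilities are all assumptions for illustration.

```python
import random


def call_reward(dropped: bool) -> float:
    """Binary reward: 1.0 for a completed call, 0.0 for a dropped one."""
    return 0.0 if dropped else 1.0


class EpsilonGreedyBandit:
    """Keeps a running mean reward per action and mostly picks the best."""

    def __init__(self, actions, epsilon=0.1, seed=0):
        self.actions = list(actions)
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.counts = {a: 0 for a in self.actions}
        self.values = {a: 0.0 for a in self.actions}

    def choose(self):
        # Explore with probability epsilon, otherwise exploit the best estimate.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.actions)
        return max(self.actions, key=lambda a: self.values[a])

    def update(self, action, reward):
        # Incremental mean: new = old + (reward - old) / n
        self.counts[action] += 1
        n = self.counts[action]
        self.values[action] += (reward - self.values[action]) / n


def simulate(drop_prob, steps=1000, seed=0):
    """Run the bandit against a toy environment where each hypothetical
    network configuration has a fixed probability of dropping a call."""
    env_rng = random.Random(seed + 1)
    bandit = EpsilonGreedyBandit(drop_prob.keys(), seed=seed)
    for _ in range(steps):
        action = bandit.choose()
        dropped = env_rng.random() < drop_prob[action]
        bandit.update(action, call_reward(dropped))
    return bandit
```

Calling `simulate({"config_a": 0.20, "config_b": 0.05})` returns a bandit whose `values` hold the estimated completion rate per configuration. In a real deployment the drop outcome would come from network records rather than a random number generator, and any intervention would be subject to the safeguards discussed in the regulatory section.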

3. CPNI as a Training Substrate

Definition and Properties of CPNI

Customer Proprietary Network Information (CPNI) is defined in U.S. law as data collected by telecom providers about how their subscribers use telecommunications services. This includes details such as the time, duration, and destination of phone calls, the device’s location during calls (based on cell tower connections), the type and amount of services used (for example, data consumption and SMS messages), and billing information[33]. Importantly, it is personally identifiable and linked to a specific customer, which triggers its protected status. For example, when you make a phone call, the carrier records metadata: your phone number, the number you dial, start and end times, and the cell towers used (providing an approximate location). Likewise, as your phone moves, it connects to different cell towers (“handoffs”), and when you use mobile data, the carrier logs how much data you use and possibly which apps or domains (at least at a category level for network management). All of this is CPNI. It does not include the content of your communications (such as call audio or SMS text, which are protected by other privacy laws). CPNI is sometimes referred to as the exhaust of the telecom network: a valuable byproduct of network operation that, in aggregate, maps out users’ digital lives.
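As an illustration of what a single call record of this kind might look like as a data structure, consider the sketch below. The field names and the pseudonymous `subscriber_id` are hypothetical and do not follow any particular carrier or standards-body schema. Consistent with the definition above, the record holds metadata only and has no field for call audio or message text.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Tuple


@dataclass(frozen=True)
class CallRecord:
    """Metadata for one voice call (illustrative schema, content excluded)."""
    subscriber_id: str            # pseudonymous key for the calling customer
    dialed_number: str            # destination of the call
    start: datetime               # call start time
    end: datetime                 # call end time
    tower_ids: Tuple[str, ...]    # towers used during the call, in order
    dropped: bool = False         # whether the call ended abnormally

    @property
    def duration_seconds(self) -> float:
        """Call duration derived from the start and end timestamps."""
        return (self.end - self.start).total_seconds()
```

The ordered `tower_ids` tuple is what gives the record its approximate location and handoff information, and the `dropped` flag is an example of the outcome signal discussed later in this section.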

The properties of CPNI make it especially attractive as a machine learning foundation:

Passive and Automatic Generation: Users don’t need to take any extra action; whenever they use their phone, CPNI data is generated. This enables large-scale collection. A carrier with 100 million subscribers could record billions of data points daily. Unlike curated text, which requires authorship or labeling efforts, CPNI is automatically produced during everyday operations.

Temporal Precision and Continuity: CPNI events are timestamped to the millisecond and occur in a continuous stream of data. This temporal accuracy allows models to learn sequences and rhythms of behavior, such as daily routines and weekly patterns, with high precision. The data is effectively real-time. While a language model might only receive fresh training data during a new web crawl or dump, perhaps monthly or quarterly, a CPNI-based model could be updated daily or even intra-day as new data becomes available.

Spatial Richness: Location handoffs and cell tower IDs provide approximate geolocation data, often accurate within a few hundred meters in urban areas with dense cell tower coverage. Over time, the sequence of tower connections can map out a user’s travel routes. This adds a spatial dimension to the model’s understanding of the world, enabling it to learn, for example, commute distances, typical times spent at work versus home, and even detect anomalies, such as a device suddenly appearing in a new city. Such spatial-temporal data is crucial for context-aware services, like suggesting an earlier departure for work based on traffic patterns, if the model recognizes a usual route and checks external data.

Outcome-Linked and Task-Relevant: Many CPNI elements naturally encode outcomes. A call either connects or drops, revealing success or failure. A customer may reach out to support after a network event, signaling dissatisfaction. These implicit labels help AI learn which patterns lead to good or bad results. This differs significantly from web text, where the “label” is merely the next word in a sentence, not tied to real-world outcomes. In network optimization, for example, CPNI might indicate that connections to Tower X at 6 PM consistently experience low throughput. An AI could detect this pattern and proactively allocate more bandwidth or reroute traffic, using CPNI to inform predictive maintenance and optimization.

Longitudinal and Cross-Context: Because telecom services are continuous, CPNI provides a persistent record of behavior. It links contexts that usually remain separate, revealing work habits through weekday call volumes, social habits through evening SMS patterns, entertainment preferences through data spikes, and mobility through regular travel or roaming. Web text rarely provides this holistic view, since a forum post does not automatically connect to activity in other domains. CPNI, keyed by subscriber, unifies these threads and enables models to understand users across multiple contexts. This comprehensive view supports more accurate personalization and multi-domain reasoning.
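The outcome-linked property described above (for example, a tower that consistently shows low throughput at a given hour) can be sketched as a simple aggregation. The input tuple format, the 5 Mbps threshold, and the minimum sample count are assumptions chosen for illustration. Working on per-tower aggregates rather than per-subscriber rows is also a deliberate choice here, since it matches the de-identified use discussed in the regulatory section.

```python
from collections import defaultdict
from statistics import mean


def low_throughput_slots(samples, threshold_mbps=5.0, min_samples=3):
    """Group throughput samples by (tower_id, hour_of_day) and return the
    slots whose mean throughput falls below threshold_mbps.

    samples: iterable of (tower_id, hour_of_day, throughput_mbps) tuples.
    Slots with fewer than min_samples observations are ignored.
    """
    buckets = defaultdict(list)
    for tower_id, hour, mbps in samples:
        buckets[(tower_id, hour)].append(mbps)

    flagged = {}
    for slot, values in buckets.items():
        if len(values) >= min_samples:
            avg = mean(values)
            if avg < threshold_mbps:
                flagged[slot] = avg
    return flagged
```

The flagged slots are the kind of implicit label the text refers to: no one annotated them, yet they identify where and when the network underperforms and can serve as targets for prediction or intervention.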

CPNI is abundant, detailed, and rich in behavioral insight. It functions as the counterpart to language data: while text captures what people say, CPNI captures what they do. This makes it especially powerful for revealing preferences and needs that are rarely stated outright. For instance, someone might never write, “I get coffee on the way to work,” but their CPNI indicates a daily pause near a café. An AI trained on such patterns could anticipate this behavior and provide timely support, like an outage alert or a nearby promotion. These capabilities arise from CPNI’s unique value as a training foundation.

Conclusion

The Allegory of the Cave reminds us that shadows alone are insufficient. Today’s LLMs, mainly trained on web text, are powerful but still rely on imitation rather than genuine experience. CPNI provides a solution: continuous behavioral signals connected to real outcomes. Meanwhile, inheritance remains essential: civilization’s accumulated knowledge is a valuable computational resource, and much of it lies beyond public internet content.

The future depends on systems that combine both. CPNI offers experiential grounding, allowing models to learn from real-time behavior. Inherited institutional knowledge encapsulates the wisdom of human history, enabling scaling without redoing evolution. Intelligence progresses most rapidly when inheritance and experience come together.

With privacy and governance built in, telecom carriers and AI providers can lead the next wave of ambient and agentic intelligence. Leaving Plato’s Cave is no longer just a metaphor; it is the crucial step toward creating AI that perceives the world as it truly is.

SOURCES

[1] From Scaling Law to Sub-Scaling Law: Understanding the ...

https://openreview.net/forum?id=LJ1zlaGdPm

[2] [3] [4] [20] [21] [22] [33] 47 U.S. Code § 222 - Privacy of customer information | U.S. Code | US Law | LII / Legal Information Institute

https://www.law.cornell.edu/uscode/text/47/222

[5] Artificial Intelligence in Call Centers: How AI is Used in Call & Contact Centers

https://www.operativeintelligence.com/blog/artificial-intelligence-call-center

[6] [13] FCC fines US wireless carriers over illegal location data sharing | Reuters

[7] [9] [10] Richard Sutton Says Scaling LLMs Won’t Necessarily Lead To Intelligence

https://officechai.com/ai/richard-sutton-says-scaling-llms-wont-necessarily-lead-to-intelligence/

[8] [11] [14] [29] [30] Richard Sutton – Father of RL thinks LLMs are a dead end

[12] [32] Dwarkesh Patel argues with Richard Sutton about if LLMs can reach AGI : r/singularity

[15] [PDF] NOT ALL LLM-GENERATED DATA ARE EQUAL - OpenReview

https://openreview.net/notes/edits/attachment?id=un7GalaBCY&name=pdf

[16] Data Management For Large Language Models: A Survey

https://arxiv.org/html/2312.01700v2

[17] Mobility-LLM: Learning Visiting Intentions and Travel Preferences from Human Mobility Data with Large Language Models

https://arxiv.org/html/2411.00823v1

[18] [19] Human Mobility Modeling with Limited Information via Large Language Models

https://arxiv.org/html/2409.17495v1

[23] Top US mobile carriers fined $200m by FCC over illegal location-data sharing | Business | The Guardian

https://www.theguardian.com/business/2024/apr/29/fcc-fines-wireless-carriers-t-mobile-verizon

[24] California’s New Privacy and Cybersecurity Regulations on Risk ...

[25] [26] [27] [28] Colorado’s Landmark AI Act: What Companies Need To Know | Insights | Skadden, Arps, Slate, Meagher & Flom LLP

https://www.skadden.com/insights/publications/2024/06/colorados-landmark-ai-act

[31] Shadow-Cave Models: How Plato’s Allegory Illuminates Limitations ...